📖 In This Issue

Featured Snippets (News & Resources)

Cover Story: Scaling Content Without Scaling Risk: Where AI Needs Guardrails

Operator of Interest: Jamie Indigo

Learn This: Natural Language Processing (NLP)

📰 Featured Snippets (News & Resources)

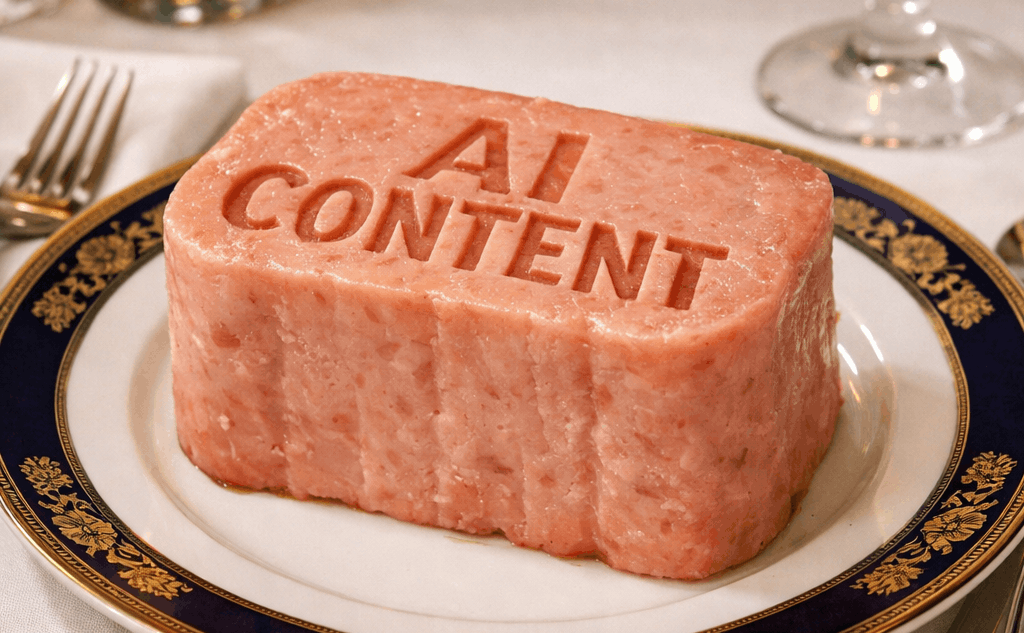

Scaling Content Without Scaling Risk: Where AI Needs Guardrails

Google’s March 2026 spam update was released (and finished rolling out) last week. This wasn’t a reminder about “AI content.” It was a reminder about what happens when publishing systems scale faster than quality systems, especially when the footprint starts to resemble what Google calls scaled content abuse: generating many pages primarily to manipulate rankings instead of helping users, “no matter how it’s created.”

Updates like this one raise a question: if AI can publish five times more pages this quarter, what exactly is supposed to scale with it? Quality, trust, and crawlability… or just raw output?

Here’s the uncomfortable part: AI isn’t a content strategy. It’s a throughput multiplier. When production scales faster than editorial review, SME input, and internal linking discipline, the failure modes don’t show up as “bad writing.” They show up as index bloat, cannibalization, weakened topical signals, and eventually, trust loss.

The real bottleneck isn’t writing. It’s governance.

The upside is real. AI can compress the time it takes to get from blank page to workable draft. It can help teams cover long-tail queries they’ve ignored for years, refresh outdated pages faster, and keep formatting and basic on-page hygiene more consistent when templates are well-built.

The downside is also real, and it’s more operational than philosophical. AI doesn’t remove constraints. It concentrates them. Editorial review becomes the constraint because someone still has to decide what’s true, what’s defensible, and what should ship. SMEs become the constraint because “sounds right” is not the same as “is right,” especially in topics where a small error becomes a credibility problem. Information architecture and internal linking become the constraint because publishing more URLs without a plan doesn’t grow authority, it dilutes signals.

Scaling content safely is mostly scaling decisions: what to publish, where it fits, and how it’s validated. That’s governance. And governance is what breaks first when velocity becomes the goal.

Failure mode #1: Fluent pages that don’t earn trust signals

At small volume, it’s easy to hide behind fluency. At scale, fluency becomes a liability because it makes weak pages look “done.” The question teams stop asking is the one that matters most: what evidence, experience, or unique data makes this page deserve to exist?

AI is great at plausible generalities. That’s not a compliment or an insult. It’s just how the system behaves. The risk is that plausible generalities are hard to QA at volume. They don’t fail loudly. They fail quietly, by producing pages that look professional but don’t generate the signals that compound over time: citations, engagement that reflects real satisfaction, links earned because someone found something they couldn’t find elsewhere, and internal confidence that your site is saying true things in a consistent way.

The simplest guardrail I’ve seen work is treating “verification” like a first-class deliverable, not a nice-to-have. In practice, that means every article needs a clear “source of truth” behind it: internal data, SME notes, tested steps, screenshots, or citations that map to the claims being made. It also means creating an assertion log for any factual statements that could be wrong in a way that matters, especially anything YMYL-adjacent. Not because Google is watching your doc. Because your future self is going to need to defend the page when rankings shift or a stakeholder asks, “Why did we publish this?”

And it means having kill criteria. If a draft can’t pass verification quickly, it doesn’t ship. Not everything deserves to become a URL.
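For teams that want to make the assertion log and kill criteria concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Assertion` and `Draft` structures, the `high_stakes` flag standing in for YMYL-adjacent claims, and the rule that a single unsourced high-stakes claim blocks the draft. Your own thresholds will differ.

```python
from dataclasses import dataclass, field


@dataclass
class Assertion:
    claim: str                 # the factual statement as it appears in the draft
    source: str = ""           # where it was verified: SME note, internal data, citation
    high_stakes: bool = False  # hypothetical flag for anything YMYL-adjacent


@dataclass
class Draft:
    title: str
    assertions: list[Assertion] = field(default_factory=list)


def unverified(draft: Draft) -> list[Assertion]:
    """Return every assertion that has no recorded source."""
    return [a for a in draft.assertions if not a.source.strip()]


def should_ship(draft: Draft, max_unverified: int = 0) -> bool:
    """Kill criterion (an assumed policy): block on any unsourced high-stakes
    claim, and on more than `max_unverified` unsourced claims overall."""
    missing = unverified(draft)
    if any(a.high_stakes for a in missing):
        return False
    return len(missing) <= max_unverified


draft = Draft(
    title="Example draft",
    assertions=[
        Assertion("Claim backed by internal data", source="analytics export"),
        Assertion("Claim nobody has checked yet", high_stakes=True),
    ],
)
print(should_ship(draft))  # False: one high-stakes claim has no source
```

The point of the structure is that "we couldn't verify it" becomes a recorded reason a draft was killed, not a judgment call made under deadline.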

Fluency is cheap. Verification is the product.

Failure mode #2: Topic sprawl turns into cannibalization and weaker relevance

Publishing at speed makes it easy to confuse “more coverage” with “more clarity.” The problem is intent overlap. Two pages can be perfectly written and still sabotage each other if they target the same job-to-be-done.

At scale, teams accidentally publish overlapping pages aimed at the same intent because the workflow starts with, “We need more keywords covered,” instead of, “We need one clean answer per intent.” Rankings wobble because Google has to choose which page to rank and you keep giving it two half-answers. Internal competition rises because you’ve built your own rivals. Crawl paths get noisy because you’ve created more near-duplicates to discover, recrawl, and re-evaluate.

The fix isn’t complicated, but it does require discipline before writing starts. Define intent clusters first, and commit to one page per primary intent. Do SERP overlap checks before publishing, not after traffic drops. Maintain query-to-URL mapping so that every new draft has an assigned “home,” and every assigned home has consolidation rules when you inevitably change your mind.
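A pre-publish overlap check can be as simple as comparing the ranking URLs for two queries. The sketch below assumes you already have top results per query from whatever rank tracker you use; the `serps` data, the example URLs, and the 0.5 threshold are all made up for illustration, and the right cutoff is something to calibrate on your own site.

```python
def serp_overlap(results_a: list[str], results_b: list[str]) -> float:
    """Jaccard overlap between two sets of ranking URLs (0.0 to 1.0)."""
    a, b = set(results_a), set(results_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def assign_home(new_query, new_url, query_to_url, serps, threshold=0.5):
    """Return the URL that should own `new_query`.

    If the new query's results overlap heavily with a query that already
    has a home, reuse that home instead of creating a competing page.
    """
    for known_query, home in query_to_url.items():
        if serp_overlap(serps[new_query], serps[known_query]) >= threshold:
            return home  # same intent: extend the existing page
    return new_url       # distinct intent: the new page gets its own home


# Hypothetical top results per query.
serps = {
    "crm pricing": ["example.com/1", "example.org/2", "example.net/3", "example.com/4"],
    "crm cost": ["example.com/1", "example.org/2", "example.net/3", "example.org/5"],
    "crm migration checklist": ["example.com/6", "example.org/7", "example.net/8"],
}
query_to_url = {"crm pricing": "/crm-pricing"}

print(assign_home("crm cost", "/crm-cost", query_to_url, serps))
# /crm-pricing
print(assign_home("crm migration checklist", "/crm-migration", query_to_url, serps))
# /crm-migration
```

Running this before a brief is written is what turns "one page per primary intent" from a principle into a checkpoint.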

More pages can mean fewer signals per page. The math is not kind here. If you spread attention, links, and internal authority across a larger set of overlapping URLs, you haven’t increased authority. You’ve redistributed it.

Failure mode #3: Internal linking debt is the silent killer

AI makes it easy to create pages. It does not automatically create a coherent site graph.

This is where a lot of “AI content programs” quietly fail. They ship dozens or hundreds of new URLs, and the linking model is either nonexistent or generic. Pages launch orphaned, mis-anchored, or connected with templated anchors that don’t reflect real relationships. Over time, the site stops behaving like an organized knowledge system and starts behaving like a pile of documents.

Internal links aren’t decoration. They’re infrastructure. They’re how you communicate topical hierarchy, relationships, and priorities at scale. Without them, you’re relying on Google to infer structure from a site that isn’t consistently structured.

One operational guardrail that scales well is treating linking as a minimum viable architecture requirement, not an optional polish step. Each new page should be connected upward to a relevant hub, laterally to a handful of true siblings (not “related posts” roulette), and downward to supporting content where it makes sense. Anchors should read like a human wrote them because they describe a real destination, not because you’re trying to jam keywords into templates.
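The up, across, and in requirements are easy to check mechanically once you have a crawl export. This is a rough sketch, assuming a `links` dictionary that maps each URL to the set of URLs it links to; the URLs, the hub and sibling sets, and the minimum of two sibling links are hypothetical. Downward links are left out of the check because they only apply where they make sense.

```python
def link_gaps(page, links, hubs, siblings, min_siblings=2):
    """List the linking minimums a page fails.

    `links` maps each URL to the set of URLs it links out to.
    `siblings` is the set of pages that are true siblings of `page`.
    """
    outbound = links.get(page, set())
    inbound = {
        src for src, targets in links.items()
        if page in targets and src != page
    }
    gaps = []
    if not inbound:
        gaps.append("orphaned: no inbound internal links")
    if not (outbound & hubs):
        gaps.append("no upward link to a hub")
    if len(outbound & siblings) < min_siblings:
        gaps.append("too few sibling links")
    return gaps


# Hypothetical crawl export for a small guides section.
links = {
    "/guides/": {"/guides/crawl-budget", "/guides/log-files"},
    "/guides/crawl-budget": {"/guides/", "/guides/log-files", "/guides/robots-txt"},
    "/guides/log-files": {"/guides/"},
    "/guides/robots-txt": set(),
}
hubs = {"/guides/"}

print(link_gaps("/guides/crawl-budget", links, hubs,
                siblings={"/guides/log-files", "/guides/robots-txt"}))
# []
print(link_gaps("/guides/robots-txt", links, hubs,
                siblings={"/guides/crawl-budget", "/guides/log-files"}))
# ['no upward link to a hub', 'too few sibling links']
```

A check like this does not judge whether an anchor reads like a human wrote it. It only catches pages that launch without the minimum structure.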

Then you need pruning. Not the dramatic kind. The boring kind. Quarterly link pruning and consolidation so you remove pathways into thin pages, redirect what no longer deserves to exist, and tighten the parts of your graph that matter most. AI increases publishing capacity. It also increases the amount of maintenance your site requires to remain coherent.

Failure mode #4: Template scaling becomes scaled content abuse

This is the part most teams want to skip, because it sounds like “Google drama.” It’s not drama. It’s enforcement.

Google’s spam policies explicitly define “scaled content abuse” as generating many pages primarily to manipulate rankings rather than help users, typically producing large amounts of unoriginal content with little to no value, regardless of how it’s created. That last clause matters: “no matter how it’s created.” This is not an “AI content” policy. It’s a low-value-at-scale policy.

Google’s own guidance on using generative AI is even more direct: using generative AI tools to generate many pages without adding value for users may violate the scaled content abuse policy.

The risk here isn’t that your pages are “AI.” The risk is that your process produces industrialized low-value content patterns: near-duplicate intent, thin differentiation, shallow value, and a site-wide footprint that looks like you’re trying to win by volume instead of usefulness.

Even if each page is “fine,” scaling patterns are visible. If your templates produce lots of pages that rhyme with each other, your site starts to look less like a library and more like a factory.
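One rough way to spot pages that rhyme is to compare them by overlapping word sequences. The sketch below uses three-word shingles and Jaccard similarity, which is a common near-duplicate heuristic and not a description of how Google evaluates anything. The page copy, the URLs, and the 0.5 threshold are invented for the example.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set:
    """Break text into overlapping k-word sequences."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def similarity(a: str, b: str) -> float:
    """Jaccard similarity of two texts' shingle sets (0.0 to 1.0)."""
    sa, sb = shingles(a), shingles(b)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


def rhyming_pairs(pages: dict, threshold: float = 0.5) -> list:
    """Return URL pairs whose body copy is suspiciously similar."""
    return [
        (url_a, url_b)
        for (url_a, text_a), (url_b, text_b) in combinations(pages.items(), 2)
        if similarity(text_a, text_b) >= threshold
    ]


# Hypothetical templated pages plus one genuinely different guide.
pages = {
    "/plumbers-austin": "Find the best plumbers in Austin with verified reviews and upfront pricing today",
    "/plumbers-dallas": "Find the best plumbers in Dallas with verified reviews and upfront pricing today",
    "/water-heater-guide": "How to size a tankless water heater for a three bedroom home",
}
print(rhyming_pairs(pages))
# [('/plumbers-austin', '/plumbers-dallas')]
```

On a real site you would run this on the main body content only, with navigation and footers stripped, or every page will look like every other page.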

The guardrails are boring, which is why they work. Ship fewer templates and force more differentiation. That differentiation doesn’t have to be fancy. It can be original examples, comparisons that reflect your product reality, decision tools, internal data, screenshots, and plain-language explanations of edge cases your customers actually hit.

Build value checks into the workflow. What does this page add that your existing pages don’t? What does it add that the SERP doesn’t? If you can’t answer that in a sentence, you’re probably publishing for output, not outcomes.

And create a scalable review layer. You do not need an SME to bless every utility page. You do need sampling audits, escalation rules, and auto-reject conditions that prevent obviously weak pages from becoming permanent URLs.
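Here is one way a review layer like that might be wired up, as a sketch under stated assumptions. The page fields (`duplicate_intent`, `differentiators`, `high_stakes`), the four outcomes, and the 10 percent sample rate are all hypothetical. Sampling is done by hashing the URL so the same page always lands in or out of the audit sample, which keeps audits reproducible.

```python
import hashlib


def in_audit_sample(url: str, sample_percent: int) -> bool:
    """Deterministically select about `sample_percent` of URLs for review."""
    digest = hashlib.sha256(url.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable value 0..99
    return bucket < sample_percent


def route(page: dict, sample_percent: int = 10) -> str:
    """Decide what happens to a draft before it becomes a URL."""
    # Auto-reject: obviously weak pages never reach a reviewer.
    if page["duplicate_intent"] or page["differentiators"] == 0:
        return "reject"
    # Escalation: anything flagged high-stakes always gets an SME.
    if page["high_stakes"]:
        return "escalate"
    # Sampling audit: a fixed share of everything else gets a human look.
    if in_audit_sample(page["url"], sample_percent):
        return "audit"
    return "publish"


draft = {
    "url": "/tools/unit-converter",
    "duplicate_intent": False,
    "differentiators": 2,   # e.g. original examples, internal data
    "high_stakes": False,
}
print(route(draft))
```

The order of the checks is the design choice: rejects are cheap and run first, escalation overrides sampling, and sampling only applies to pages that would otherwise ship unreviewed.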

If your process can’t explain why each page deserves to exist, Google doesn’t have to either.

A pragmatic control model for scaling with guardrails

There’s a balanced truth here that’s worth stating plainly.

Yes, you can scale responsibly if you treat AI as a drafting layer inside a governed system. No, you can’t skip the slow parts (SME input, editing, linking, pruning) without paying for it later.

The most practical model I’ve seen is tiering, because it matches effort to risk.

Your highest-stakes pages, the ones that drive revenue, signups, pipeline, or brand trust, need heavy oversight. They need real SME involvement, real editorial scrutiny, and intentional link architecture. Supporting guides can run with strong editorial standards and selective SME sampling, because the downside is smaller and the benefit is breadth. Utility pages can be templated, but only if the value criteria are strict and pruning is aggressive. Utility content is where most programs accidentally manufacture risk, because it’s where “we can publish a lot” quietly becomes “we published a lot.”

On top of tiering, you need quality gates that must pass before shipping. Not word count gates. Substance gates. Is the intent unique on your site? Is there evidence behind claims that matter? Does the page meet internal linking minimums that reflect your site structure? Does it clear a thinness threshold based on usefulness, not length?
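Tiering and gates fit together naturally in code, because the tier sets how strict each gate is. The sketch below is illustrative only: the tier labels (`core`, `guide`, `utility`), the inbound link minimums, and the boolean fields are assumptions, and in practice each boolean would be the output of a real check or a reviewer's sign-off.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    tier: str                   # "core", "guide", or "utility" (assumed labels)
    intent_is_unique: bool      # no existing URL already owns this intent
    claims_have_evidence: bool  # claims that matter trace to a source
    inbound_links: int          # internal links pointing at this page
    adds_something_new: bool    # usefulness check, not a word count
    sme_reviewed: bool = False


# Hypothetical linking minimums: stricter for higher-stakes tiers.
MIN_INBOUND_LINKS = {"core": 3, "guide": 2, "utility": 1}


def failed_gates(page: Page) -> list[str]:
    """Return the substance gates a page fails. An empty list means ship."""
    failures = []
    if not page.intent_is_unique:
        failures.append("intent overlaps an existing URL")
    if not page.claims_have_evidence:
        failures.append("claims lack evidence")
    if page.inbound_links < MIN_INBOUND_LINKS[page.tier]:
        failures.append("below internal linking minimum")
    if not page.adds_something_new:
        failures.append("fails usefulness threshold")
    if page.tier == "core" and not page.sme_reviewed:
        failures.append("core page needs SME review")
    return failures


page = Page(
    url="/pricing-explained",
    tier="core",
    intent_is_unique=True,
    claims_have_evidence=True,
    inbound_links=2,
    adds_something_new=True,
)
print(failed_gates(page))
# ['below internal linking minimum', 'core page needs SME review']
```

Returning the list of failures, not a pass or fail flag, matters at volume: it tells the team which part of the system is the constraint this quarter.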

This is what “scaling quality” actually looks like: scaling confidence, not just pages.

What to tell your boss/client now

AI content velocity creates latent risk, the kind that looks like success until it doesn’t.

If you want a leadership-friendly framing that stays honest, it’s this: we can increase output, but output without governance turns into debt. That debt shows up later as crawl waste, ranking instability, consolidation work, and pages you have to defend when someone asks why they exist.

The practical promise is also simple: if we invest in guardrails now (editorial systems, SME workflows, internal linking discipline, and a pruning habit), we can grow output without quietly increasing spam exposure or eroding site quality signals.

AI multiplies whatever you already are. Guardrails decide whether that’s quality, or spam.

👤 Operator of Interest: Jamie Indigo

Known for: Technical SEO & JavaScript Legend

Works at: Cox Automotive Inc

(formerly at DeepCrawl, Moz, eBay, and others)

Follow: LinkedIn

Learn This:

Natural Language Processing (NLP): The field of computer science focused on enabling computers to understand, interpret, and generate human language.

One more thing: AI is only as good as its operator, and if you are reading this newsletter, you are better than most!

Till next time,

Joe Hall

PS: Let me know what you think of this issue, or anything else here: [email protected]